In the age of AI, the real question is no longer just whether an answer is correct, but whether we are capable of knowing if it is correct. When a response is fluent, structured, and confident, it creates an illusion of authority. This makes it difficult to distinguish between a genuine insight and a well-crafted error. The line between a model’s hallucination and a user’s limitation is thinner than most people realize.

A hallucination, in the context of AI, is not random nonsense. It is often a highly plausible, internally consistent response that simply does not correspond to reality. These outputs arise because models generate language based on probability rather than verified truth. They are trained on patterns, and the result is language that often sounds right — because it is statistically consistent with what has been seen before, not because it has been validated. This is why even well-structured explanations can be fundamentally flawed, especially where factual grounding is weak or ambiguous. The danger lies precisely in this elegance, which disarms skepticism — a sweet poison that often prevails over the discipline required to face a bitter truth.
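The point about probability versus verified truth can be made concrete with a toy sketch. The bigram sampler below is not how production models work; it is a deliberately tiny stand-in, and the corpus, function names, and seed are all assumptions chosen for illustration. Every sentence in the corpus is true, yet the sampler can still produce "the capital of france is rome", because each word only has to be a plausible follower of the previous one.

```python
import random
from collections import defaultdict

# Hypothetical toy corpus: both training sentences are factually true.
CORPUS = (
    "the capital of france is paris . "
    "the capital of italy is rome ."
).split()


def build_bigrams(tokens):
    """Map each word to the list of words observed immediately after it."""
    followers = defaultdict(list)
    for current, nxt in zip(tokens, tokens[1:]):
        followers[current].append(nxt)
    return followers


def generate(followers, start, max_words, rng):
    """Sample one word at a time, weighted by how often it followed the last."""
    words = [start]
    while len(words) < max_words and words[-1] in followers:
        words.append(rng.choice(followers[words[-1]]))
        if words[-1] == ".":  # stop at the end of a sentence
            break
    return words


followers = build_bigrams(CORPUS)
sentence = generate(followers, "the", 10, random.Random(0))
print(" ".join(sentence))
```

Nothing in `generate` checks a fact. Whether the printed sentence is true depends only on which follower the sampler happened to pick, which is the sense in which fluent output is statistically consistent rather than validated.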

At the same time, users often operate outside their domain of knowledge. In these situations, the issue is not necessarily the quality of the answer, but the absence of the foundation required to evaluate it. Without strong — sometimes even elementary — knowledge, validation becomes impossible. A correct answer and an incorrect one can look identical to someone who lacks the tools to tell them apart.

This problem becomes worse when flawed assumptions go unchallenged. If a question is built on an incorrect premise, the response may still appear logical while inheriting that error. Without deliberate effort to test assumptions, both the model and the user can reinforce a misunderstanding instead of correcting it.

In educational contexts where advancement is sometimes disconnected from mastery, individuals may progress without developing the knowledge required for effective validation. The corrective is procedural: when using AI, one should explicitly request references, underlying assumptions, and verifiable sources to ground each response. At the same time, queries must be scoped to what can realistically be understood and tested, expanding progressively rather than jumping into domains where validation is impossible. AI can assist in the construction of understanding, but it cannot substitute for the process required for mastery. Used properly, it functions as a scaffold: it supports learning, structures reasoning, and enables verification. Used improperly, it becomes a crutch that replaces thought. The risk is not created by AI, but amplified when outputs are accepted without the means, or the intent, to test them.
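As one possible way to make that procedure routine, the sketch below wraps any question in a fixed set of grounding requests. The function name `build_grounded_prompt` and the exact wording of the requests are assumptions for illustration, not a standard or a guarantee of accurate answers; the references a model returns still have to be checked by the reader.

```python
# Hypothetical grounding requests; the wording is illustrative only.
GROUNDING_REQUESTS = (
    "List the assumptions your answer depends on.",
    "Cite references or sources I can check independently.",
    "Point out any part of the answer you are unsure about.",
)


def build_grounded_prompt(question: str) -> str:
    """Append explicit verification requests to a plain question."""
    question = question.strip()
    if not question:
        raise ValueError("question must not be empty")
    numbered = [f"{i}. {req}" for i, req in enumerate(GROUNDING_REQUESTS, start=1)]
    return "\n".join([question, "", "In your answer, also:", *numbered])


print(build_grounded_prompt("Why does ice float on water?"))
```

The design choice here is simply to keep the requests in one place so they are applied to every query instead of being remembered case by case.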

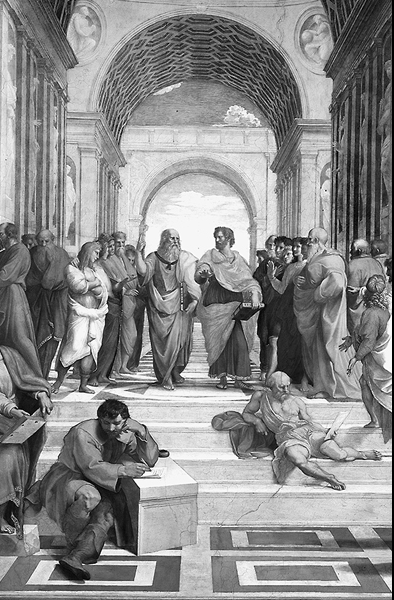

Ultimately, the real danger is not the hallucination itself, but the habit of unverified acceptance. As Friedrich Nietzsche warned in his own way, comfort often disguises itself as truth, and what feels convincing is not necessarily what is real. Long before that, Plato described prisoners mistaking shadows for reality, while Aristotle argued that knowledge begins with the discipline of questioning appearances. AI does not make people wiser or more ignorant—it simply accelerates the path they are already on. The difference is not in the machine, but in whether one chooses to remain among the shadows or step outside the cave and test what is seen.
